Make sure you have model.tflite in your Downloads folder; it seems the program could not find the file.

Thanks, you have solved many of my questions, but I still have something I need to resolve. Now I want to try EDSR with quantization-aware training for practice. I implemented upsample like this:

-----------------------------------------------------------------------------------------------------------------------------------

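import tensorflow as tf
import tensorflow_model_optimization as tfmot
from tensorflow.keras.layers import Conv2D
from tensorflow_model_optimization.python.core.quantization.keras.default_8bit import default_8bit_quantize_configs

def upsample(x, num_filters):
    # Produce num_filters * 3**2 channels so depth_to_space (block size 3)
    # can rearrange them into a 3x larger map with num_filters channels.
    x = Conv2D(num_filters * (3 ** 2), 3, padding='same')(x)
    depth2space = tf.keras.layers.Lambda(lambda t: tf.nn.depth_to_space(t, 3))
    # Annotate the Lambda layer with a config that quantizes no part of it.
    x = tfmot.quantization.keras.quantize_annotate_layer(
        depth2space,
        quantize_config=default_8bit_quantize_configs.NoOpQuantizeConfig())(x)
    return x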

--------------------------------------------------------------------------------------------------------------------------------------
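For reference, `tf.nn.depth_to_space` with block size 3 is the reason the Conv2D above outputs `num_filters * 3**2` channels: each group of 9 channels at one pixel becomes a 3x3 patch with `num_filters` channels. Below is a minimal NumPy sketch that mimics the NHWC behaviour described in the TensorFlow docs, useful for checking shapes without building the model. `depth_to_space_np` is a hypothetical helper name for illustration, not a TensorFlow or tfmot API.

```python
import numpy as np


def depth_to_space_np(x, block_size):
    """NumPy sketch of NHWC depth_to_space (hypothetical helper, not a TF API)."""
    n, h, w, c = x.shape
    if c % (block_size ** 2) != 0:
        raise ValueError("channels must be divisible by block_size ** 2")
    c_out = c // (block_size ** 2)
    # Split channels into (row offset, column offset, output channel).
    x = x.reshape(n, h, w, block_size, block_size, c_out)
    # Interleave offsets with spatial axes: (n, h, row_off, w, col_off, c_out).
    x = x.transpose(0, 1, 3, 2, 4, 5)
    return x.reshape(n, h * block_size, w * block_size, c_out)


# One pixel with 4 channels becomes a 2x2 patch with 1 channel.
x = np.arange(1, 5).reshape(1, 1, 1, 4)
print(depth_to_space_np(x, 2)[0, :, :, 0].tolist())  # [[1, 2], [3, 4]]

# EDSR-style x3 upsampling: num_filters * 3**2 channels -> num_filters channels.
num_filters = 64
features = np.zeros((1, 8, 8, num_filters * 3 ** 2))
print(depth_to_space_np(features, 3).shape)  # (1, 24, 24, 64)
```

The Conv2D channel count and the block size must agree (`channels % block_size**2 == 0`); otherwise the rearrangement is undefined, which the sketch reports as a `ValueError`.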

Is this right? After the training section, I use:

--------------------------------------------------------------------------------------------------------------------------------------

import tempfile

import tensorflow as tf
import tensorflow_model_optimization as tfmot

# Save the weights of the trained float model to a temporary file.
_, pretrained_weights = tempfile.mkstemp('.tf')
model.save_weights(pretrained_weights)

# Rebuild the architecture and restore the trained weights.
base_model = edsr()
base_model.load_weights(pretrained_weights)  # optional but recommended for model accuracy

quant_aware_model = tfmot.quantization.keras.quantize_model(base_model)

# Save or checkpoint the model.
_, keras_model_file = tempfile.mkstemp('.h5')
quant_aware_model.save(keras_model_file)

# `quantize_scope` is needed for deserializing HDF5 models.
with tfmot.quantization.keras.quantize_scope():
    loaded_model = tf.keras.models.load_model(keras_model_file)

----------------------------------------------------------------------------------------------------------------------------------------

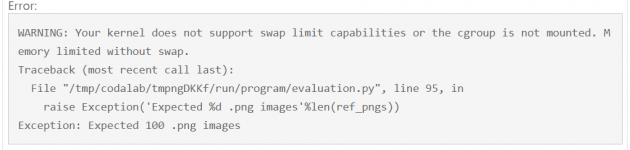

But it gives me this error:

ValueError: Unable to clone model. This generally happens if you used custom Keras layers or objects in your model. Please specify them via `quantize_scope` for your calls to `quantize_model` and `quantize_apply`.

I'm about to collapse, because there is no template for this on GitHub 0.0