All questions related to the Real-Time Image Super-Resolution Challenge can be asked in this thread.

Real-Time Image Super-Resolution Challenge

- Thread starter Andrey Ignatov

When I was testing I ran into the following problem:

NN API returned error ANEURALNETWORKS_BAD_DATA at line 1968 while setting new operand value for tensor 'model/lambda_2/clip_by_value/Minimum/y;StatefulPartitionedCall/model/lambda_2/clip_by_value/Minimum/y'.

TensorFlow version 2.4.1

Some questions:

1. How can this happen?

2. Does the clip operation need to be inside the model? I see that it is not in the demo https://github.com/aiff22/MAI-2021-Workshop/blob/main/fsrcnn_quantization/fsrcnn.py

NNAPI does not support clip_by_value op, thus you should remove it prior to model conversion.
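If the clip is taken out of the graph as suggested here, the output range has to be enforced somewhere else during local testing. The sketch below shows one way to do that in post-processing. It is plain Python for illustration only: `clamp_to_uint8` is a hypothetical helper, and with NumPy arrays `np.clip` would be the usual choice. Later replies in this thread indicate that the organizers expect the clip inside the submitted model, so treat this as a local-testing aid rather than the official pipeline.

```python
def clamp_to_uint8(values, low=0, high=255):
    """Round each raw model output and clamp it to the [low, high] pixel range."""
    return [min(max(int(round(v)), low), high) for v in values]


# Raw outputs of a model without a clip layer may fall outside [0, 255].
raw_output = [-12.7, 0.2, 127.6, 254.9, 300.0]
print(clamp_to_uint8(raw_output))  # [0, 0, 128, 255, 255]
```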

Dear organizer:

We found that last year's winning solution ran very slowly on our smartphones. It is therefore difficult to trade off speed and accuracy using only our own mobile phones. If we could get speed results from the target evaluation platform, we could optimize our model better.

We would like to know when and how we can get speed results through remote testing on the target evaluation platform.

Thank you. I would be grateful if you could reply as soon as possible.

Lawrence

2022.6.3

mrblue3325

New member

Hi Lishen,

Did you use the demo https://github.com/aiff22/MAI-2021-Workshop/blob/main/fsrcnn_quantization/fsrcnn.py as your starting point?

I noticed that the quantization part of that demo uses a fixed input size (TFLITE_MODEL_INPUT_SHAPE = [1, 360, 640, 1]).

Do you know how to change it to TFLITE_MODEL_INPUT_SHAPE = [1, None, None, 1] to meet the submission requirement?

I have heard that TFLite does not handle dynamic input shapes well.

Thanks.

Ssakura-go

New member

Hi, mrblue3325

Have you found easy-to-use testing code for Mobile Image Super Resolution? If you have solved the problem, could you please share the code with me? (I would also like to test with PyTorch.)

Thanks.

Well, the competition page https://codalab.lisn.upsaclay.fr/competitions/1755#participate states that the input should have 3 channels. Also, try setting experimental_new_converter to True.

Ssakura-go

New member

Understood, thanks!

Hello,

I have a question: what is the submission format in this (validation) phase?

I thought I should submit 100 .png images (with readme.txt), which are the inference results for the validation set.

But the archive is larger than 200MB, which exceeds the 30MB limit, so I could not submit it.

Thank you.

Seongmin

mrblue3325

New member

Do you mean that the 100 inference results are the super-resolved (SR) images?

I have the same problem.

Right.

Dear Andrey Ignatov,

Do you have a specific timetable for opening remote evaluation on the target platform? I see that Track Two has just been updated.

Looking forward to your reply.

Best,

Which device has the Synaptics VS680?

May I ask whether there are any updates on the benchmarking functionality?

Hello,

Are there any updates on the possibility of measuring latency on the target platform?

Maciej

mrblue3325

New member

Hello,

Have you found a way to upload the SR images?

Hello,

If the clip op cannot be inside the model, I have two questions:

1. When we submit the model, how will the official code verify the PSNR?

2. Is the same model used for verifying both PSNR and runtime? What if an operation is not supported by the SoC, but the model still passes the PSNR verification?

mrblue3325

New member

Hello,

How can I upload the SR images? The whole zip is almost 300MB.

Also, should the images be named 801x3.png or 801.png?

Thanks.

Neither; a name like "0801.png" is fine. Remember to add "readme.txt" and model.tflite to your zip file.

mrblue3325

New member

Thanks.

But I notice that the online system has a 30MB upload limit.

How can you submit the full-size SR images, which take up 300MB?

Hello,

I have some questions about the testing of running tflite model on the smartphone (AI Benchmark).

Specifically, the converted 'float32' tflite model works fine on all acceleration modes, but I find that the 'Int8' model performs much slower when I test it on NNAPI. As below:

| Quantization | CPU (ms) | GPU (ms) | NNAPI (ms) |
| --- | --- | --- | --- |
| Float32 | 381 | 116 | 110 |
| Int8 | 158 | 56 | 4794 (too slow) |

I use the following code to generate the tflite model:

```python
import tensorflow as tf

# Fixed input shape used for conversion: (batch, height, width, channels).
input_shape = [1, 360, 640, 3]
model_path = '.ckpt/model'

# Load the SavedModel and pin the serving signature to the fixed shape.
model = tf.saved_model.load(model_path)
concrete_func = model.signatures[tf.saved_model.DEFAULT_SERVING_SIGNATURE_DEF_KEY]
concrete_func.inputs[0].set_shape(input_shape)

converter = tf.lite.TFLiteConverter.from_concrete_functions([concrete_func])
converter.experimental_new_converter = True
converter.experimental_new_quantizer = True

# Full-integer (int8) post-training quantization with uint8 input and output.
converter.optimizations = [tf.lite.Optimize.DEFAULT]
converter.representative_dataset = representative_dataset_gen  # defined elsewhere (not shown in the post)
converter.target_spec.supported_ops = [tf.lite.OpsSet.TFLITE_BUILTINS_INT8]
converter.inference_input_type = tf.uint8
converter.inference_output_type = tf.uint8

tflite_model = converter.convert()

# `name` is defined elsewhere (not shown in the post).
with open("tflite/{}.tflite".format(name), "wb") as f:
    f.write(tflite_model)
```

Do you have any ideas?

Hello, I would like to know where to get the timing report. Thanks for your reply!

You need to submit your results first; the report is here: https://github.com/mdenna-synaptics/codalab2022/blob/main/report.json

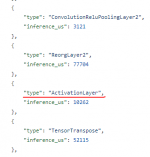

However, I have a few doubts. The latency of the same layers seems to vary a lot between submissions. Moreover, for the majority of submissions the Transpose layer latency is larger than the full model latency...

Thanks so much!

mrblue3325

New member

Hello, I have a problem with the submission.

I notice that the online system has a 30MB upload limit.

How can you submit the full-size SR images, which take up 300MB?

I have the same question.

The max file size limit was increased to 500MB.

Can we add other datasets to train the model, such as Flickr2K?

Yes, you can use any other datasets for training your models.

The good news is the timing report is published, but does anyone know how to find out our submission id?

The published runtime results now include your usernames and submission dates.

mrblue3325

New member

Thanks for the reply.

Is the PSNR value measured on the 100 uploaded SR images, or is it computed again online by running inference with the TFLite model?

Also, should the standard range of input images for the model be [0, 255] instead of [0, 1]?

When I submit the zip file, there is an error.

WARNING: Your kernel does not support swap limit capabilities or the cgroup is not mounted. Memory limited without swap.

Traceback (most recent call last):

File "/tmp/codalab/tmpNCzOv2/run/program/evaluation.py", line 95, in

raise Exception('Expected %d .png images'%len(ref_pngs))

Exception: Expected 100 .png images

How can I solve it?

Thanks.
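On the PSNR and value-range question in this post: the thread does not say how the official scorer computes PSNR, so the following is only a sketch of the standard definition, assuming 8-bit images with a peak value of 255. The function name `psnr` and the flat-list pixel representation are illustrative choices, not the competition's code. It shows why the range matters: the peak value passed to the formula has to match the range of the pixel data.

```python
import math


def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB between two equal-length pixel sequences."""
    if len(reference) != len(restored):
        raise ValueError("inputs must have the same number of pixels")
    # Mean squared error over all pixels.
    mse = sum((r - s) ** 2 for r, s in zip(reference, restored)) / len(reference)
    if mse == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(max_value ** 2 / mse)


# The same relative error gives the same PSNR in either range,
# as long as max_value matches the range of the data.
print(psnr([0, 255], [127.5, 255], max_value=255.0))
print(psnr([0.0, 1.0], [0.5, 1.0], max_value=1.0))
```

Mixing the two conventions, for example scoring [0, 1] data with a peak of 255, would inflate the result, so it is worth checking locally which range a model was trained on.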

mrblue3325

New member

May I ask how you all upload the zip?

I upload 100 SR images, named from 801 to 900.png, together with the readme and the TFLite model.

However, I get an error every time.

mrblue3325

New member

Did you upload the 100 SR PNGs and run the platform's evaluation successfully?

I always get this error.

WARNING: Your kernel does not support swap limit capabilities or the cgroup is not mounted. Memory limited without swap.

Traceback (most recent call last):

File "/tmp/codalab/tmpNCzOv2/run/program/evaluation.py", line 95, in

raise Exception('Expected %d .png images'%len(ref_pngs))

Exception: Expected 100 .png images

In the published profiling report, the total inference latency is not equal to the sum of the per-layer latencies. Some layers show very high numbers regardless of the type of operation, while the total latency is sometimes much lower.

How will latency be counted in the competition? Will it be the sum of the layer latencies, or is it the total latency that matters?

Hi, @Andrey Ignatov, I see that 'model_runtime.tflite' has not been mentioned above. Does 'model_runtime.tflite' here mean 'model.tflite' with the input size [1,360,640,3]?

mrblue3325

New member

Yes, I think so, since 360x640 is the input size used for speed testing.

Congrats, I saw that you achieved a nice score.

May I ask how you submitted the zip file?

Did you zip the 100 SR images named 0801.png, etc., and is the file over 300MB?

I always get an error during submission and am frustrated about it.

File "/tmp/codalab/tmpNCzOv2/run/program/evaluation.py", line 95, in

raise Exception('Expected %d .png images'%len(ref_pngs))

Exception: Expected 100 .png images

Yes, I just put the 100 SR images, the TFLite model, and readme.txt in the root of the archive and zipped them. The file size is OK.
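Since several posters hit the "Expected 100 .png images" error, a local check of the archive layout before uploading may help. The sketch below is based only on what replies in this thread describe (files named like 0801.png through 0900.png, plus readme.txt and model.tflite, all in the archive root); the scorer's exact rules are an assumption, and `check_submission` and `build_demo_zip` are hypothetical helpers.

```python
import io
import zipfile

# File names taken from replies in this thread; the scorer's exact rules are an assumption.
EXPECTED_PNGS = ["%04d.png" % i for i in range(801, 901)]  # 0801.png ... 0900.png
REQUIRED_EXTRAS = ["readme.txt", "model.tflite"]


def check_submission(zip_file):
    """Return the required files that are missing from the archive root."""
    with zipfile.ZipFile(zip_file) as archive:
        names = set(archive.namelist())
    return [name for name in EXPECTED_PNGS + REQUIRED_EXTRAS if name not in names]


def build_demo_zip(prefix=""):
    """Build an in-memory archive with placeholder bytes (illustration only)."""
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w") as archive:
        for name in EXPECTED_PNGS + REQUIRED_EXTRAS:
            archive.writestr(prefix + name, b"placeholder")
    buffer.seek(0)
    return buffer


print(len(check_submission(build_demo_zip())))            # 0: everything is at the root
print(len(check_submission(build_demo_zip("results/"))))  # 102: files nested in a folder
```

A common way to end up with the nested layout is zipping the parent folder instead of its contents; `check_submission` also accepts a path to a real zip file.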

mrblue3325

New member

Hello,

Does the final official inference code apply an np.clip function to the output or not?

If not, we need the clip layer in our model, which costs us an extra 8ms in total.

It would be good to clarify this, as it may significantly affect the runtime.

Thanks a lot.

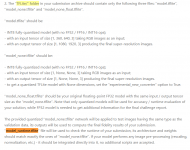

Hello,

I have a question: my timing results show an ActivationLayer after ReorgLayer2. However, the TFLite model I submitted should not have an ActivationLayer. Which operation does this ActivationLayer come from?

Thank you.

Do you use any operation after the Reorg layers?

mrblue3325

New member

I have the same question.

Does that refer to the clip layer (which acts as a minimum and a ReLU layer after quantization)?

That's why I asked whether the final official inference code applies an np.clip function to the output or not.

If yes, we can remove the clip layer and save time.
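The remark that a clip acts as a minimum plus a ReLU can be checked with a few lines of arithmetic. This is a plain-Python sketch of the identity clip(x, 0, high) = min(relu(x), high); it says nothing about how the Synaptics toolchain actually lowers the op, and the function names are illustrative.

```python
def relu(x):
    """Rectified linear unit: negative values become zero."""
    return max(x, 0.0)


def clip(x, low, high):
    """Reference clamp of x to the closed interval [low, high]."""
    return min(max(x, low), high)


def clip_as_relu_min(x, high=255.0):
    """The same clamp for low = 0, written as a ReLU followed by a minimum."""
    return min(relu(x), high)


samples = [-50.0, 0.0, 12.5, 255.0, 400.0]
print([clip(x, 0.0, 255.0) for x in samples])
print([clip_as_relu_min(x) for x in samples])
```

Both lines print the same values, which is consistent with a clip showing up as an activation-style layer in a per-layer timing report.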

Hello, do you have this problem when you remove the clip layer: https://github.com/mdenna-synaptics/codalab2022/issues/8 https://github.com/mdenna-synaptics/codalab2022/issues/9

I have this problem, so I'm worried that the sponsor won't help me with the multiply/rescale step during the final test phase.

This activation after Reorg is simply Clip (which is not included in the final code, based on the previous competition results).

The test phase has already started, but the input images are not available. When can we expect them to be ready for download? https://codalab.lisn.upsaclay.fr/competitions/1755#participate-get_data

@Andrey Ignatov

Regarding "which is not included in the final code": do you mean that the organizer will not perform the clip operation for us? I don't understand this.

Last year's winning solution (ABPN) has a clip operator in its code: https://github.com/NJU-Jet/SR_Mobil...419f1cb026ec041b/solvers/networks/base7.py#L9

(Sorry, I'm not proficient in English. I hope you can understand it and discuss it together)

What I mean is that you need to include this clip operation in the model (similar to the code you shared).

Thank you for your reply. I agree with you.

Hi, @Andrey Ignatov, I see that 'model_runtime.tflite' has not been mentioned above. Does 'model_runtime.tflite' here mean 'model.tflite' with the input size [1,360,640,3]?

Yes.

In the competition, how would it be counted? Would it be sum of latencies from layers, or do we need to care about total latency?

Only the total latency is taken into account.

If not, we need the clip layer in our model, and this costs us an extra 8ms in total.

Yes.

Is it still possible to measure the speed during the test phase?

Yes.

@Andrey Ignatov - the factsheet template mentions a requirement to provide all code or an executable. What is meant by 'executable' here? Is it a TFLite file?