operator45

Phone Model: Samsung Galaxy S21

App version: 5.0.3. I also tried the nightly build (24.12.2022).

TF version: 2.10. I also tried 2.12 and 2.5.

I can run the model on CPU without any errors. However, as soon as I select the TFLite GPU Delegate, I get this error:

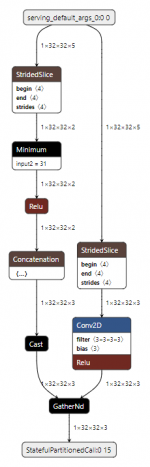

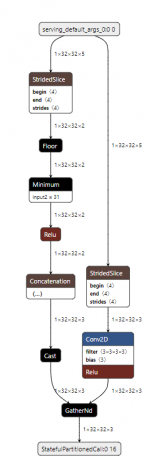

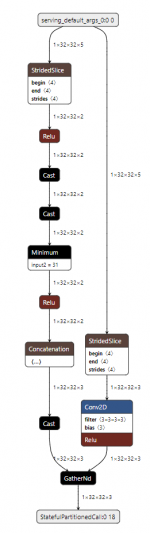

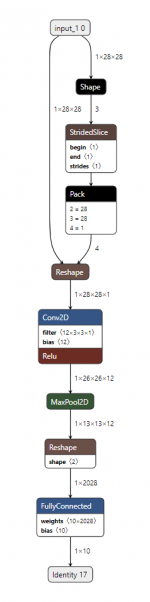

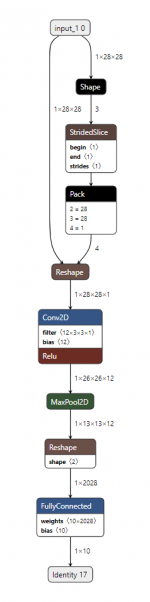

The model consists of just conv2d, split, add and floor ops.

I also used the TFLite Analyzer to check and it says that the model is compatible with the GPU delegate.
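For anyone reproducing this, here is a minimal sketch of the kind of Analyzer check meant above. It assumes TensorFlow 2.9 or newer (where `tf.lite.experimental.Analyzer` is available) and a hypothetical local file name; it is not necessarily the exact script in the linked folder.

```python
from pathlib import Path


def check_gpu_compatibility(model_path: str) -> None:
    """Print the TFLite Analyzer report, including GPU delegate compatibility notes."""
    path = Path(model_path)
    if not path.is_file():
        # Fail early with a clear message instead of a converter-level error.
        raise FileNotFoundError(f"No .tflite file at {path}")

    # Imported lazily so the path check above works even without TensorFlow installed.
    import tensorflow as tf

    # gpu_compatibility=True asks the analyzer to flag ops the GPU delegate may reject.
    tf.lite.experimental.Analyzer.analyze(
        model_path=str(path),
        gpu_compatibility=True,
    )

# Example call (hypothetical file name):
# check_gpu_compatibility("model.tflite")
```

Note that the Analyzer checks compatibility against the TFLite runtime bundled with the installed TensorFlow, which may differ from the runtime inside the app.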

Here is the link for the tflite model and the code to reproduce: https://drive.google.com/drive/folders/10DyxR6JfBn2PM8-S6JIT_MidOCQxoxzw?usp=share_link

I can run other, more complex models that I downloaded (not converted by me) on GPU without any problems, so it seems the issue is with the conversion on my end. Do you have any suggestions as to which TF version I should use? What about the conversion parameters?

When I follow the official MNIST float16 tutorial, the resulting model fails to run on GPU as well: https://www.tensorflow.org/lite/performance/post_training_float16_quant
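For context, the conversion step in that tutorial is roughly the following. This is a sketch assuming a trained Keras model is passed in; the function name is hypothetical and only wraps the standard `tf.lite.TFLiteConverter` settings for float16 post-training quantization.

```python
def convert_to_float16(keras_model) -> bytes:
    """Convert a Keras model to a float16-quantized TFLite flatbuffer."""
    # Imported lazily so this helper can be defined without TensorFlow installed.
    import tensorflow as tf

    converter = tf.lite.TFLiteConverter.from_keras_model(keras_model)
    # Enable default optimizations and restrict weights to float16.
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    converter.target_spec.supported_types = [tf.float16]
    return converter.convert()

# Example usage (hypothetical output path):
# with open("model_fp16.tflite", "wb") as f:
#     f.write(convert_to_float16(model))
```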
