All questions related to Learned Smartphone ISP Challenge can be asked in this thread.

Learned Smartphone ISP Challenge

- Thread starter Andrey Ignatov

- Start date

sayak

New member

The RAW validation images we are provided with are of 256x256 resolution. So, my question is what should be the size of the output RGB images? The competition homepage mentions it should be the same as the input size of the RAW images. But then again, the TFLite model's input size is different hence I am a bit confused.

Hi @sayak,

The RAW images provided to us are 256x256 which is different from what's expected in the final model. So, what should be done here? One immediate thing we could do is resize the RAW images. But I wanted to know from the organizers to get more systematic directions.

The provided raw 256x256px patches are used for checking PSNR / SSIM results of your model. However, when converting it to TFLite format, you should change the size of the input tensor to [1, 544, 960, 4] as is specified here.

Could anyone clarify as to which latency (CPU/GPU) is to be recorded in readme.txt? Do I run the TFLite model on my mobile phone or on MediaTek Dimensity 1000+ (APU)? If the latter, then what is the suggested method?

No, here you can specify the latency obtained on your own smartphone.

The RAW validation images we are provided with are of 256x256 resolution. So, my question is what should be the size of the output RGB images? The competition homepage mentions it should be the same as the input size of the RAW images. But then again, the TFLite model's input size is different hence I am a bit confused.

Yes, the size of the outputs used for fidelity scores computation is 256x256px. As is mentioned in the challenge description, the size of the input / output tensors of the submitted TFLite model should be [1, 544, 960, 4] and [1, 1088, 1920, 3], respectively.
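A small sketch of how the two sets of shapes in this answer may relate. It assumes that both the 256x256 and the 1088x1920 figures refer to the full Bayer mosaic, and that the model consumes a 4-channel packed tensor at half that resolution. The helper names are hypothetical and not part of the challenge code.

```python
def packed_input_shape(height, width):
    """Shape of the 4-channel tensor obtained by packing an H x W Bayer mosaic."""
    if height % 2 or width % 2:
        raise ValueError("Bayer mosaic dimensions must be even")
    return (1, height // 2, width // 2, 4)


def rgb_output_shape(height, width):
    """Shape of the RGB tensor reconstructed at the full mosaic resolution."""
    return (1, height, width, 3)


# Full-resolution model used for the latency measurement.
print(packed_input_shape(1088, 1920), rgb_output_shape(1088, 1920))
# Patch-sized model used for the PSNR / SSIM images (assumed packing).
print(packed_input_shape(256, 256), rgb_output_shape(256, 256))
```

Under these assumptions the same network weights serve both cases, and only the static input size set at conversion time differs.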

sayak

New member

Thank you so much @Andrey Ignatov. This is really helpful.

When reporting the latency, we are to report it on `[1, 544, 960, 4]` tensors, right? And we are free to use either mobile CPU or GPU?

Yes, that's correct.

sayak

New member

Hi folks.

I created a Colab Notebook in order to help the community get started with the provided scripts more quickly. It's now a part of the official baseline repository as well. I would be grateful if the Colab Notebook could be mentioned in the official channels.

sayak

New member

@Andrey Ignatov, following your PyNet work, I was wondering what would be the right level (5, 4, ..., 0) for exporting the frozen graph for the later TFLite conversion.

Level 0 is the right one.

Hi @sayak,

After installing it on my phone (Realme Pro 2) it didn't open. Any clues?

Please create a new thread describing your issue here, and also add the following information:

- Device model id (as indicated in the settings, e.g., RMX1801)

- Android version (e.g., 8.1)

- When exactly this problem appears: right after starting the app, after the splash screen, after pressing the "Start AI Tests" button, etc.

I got the shape of the input.

1. Does the input come in like the following (as described in the sample code)?

ch_B = raw[1::2, 1::2]

ch_Gb = raw[0::2, 1::2]

ch_R = raw[0::2, 0::2]

ch_Gr = raw[1::2, 0::2]

RAW_combined = np.dstack((ch_B, ch_Gb, ch_R, ch_Gr))

RAW_norm = RAW_combined.astype(np.float32) / (4 * 255)

2. Do output channels 0, 1 and 2 correspond to R, G and B? Should output be float32 or uint8?

sayak

New member

I serialized the output with integer data-type and kept the range to [0, 255]. Regarding your first question, this SO thread has some good explanations: https://stackoverflow.com/questions/58688633/how-to-convert-bayerrg8-format-image-to-rgb-image.

Hi @Minsu,

1. Does the input come in like the following (as described in the sample code)?

RAW_norm = RAW_combined.astype(np.float32) / (4 * 255)

Almost correct - in this challenge, we are using 14-bit raw images instead of the 10-bit ones, therefore raw pixel values should be divided by 16 * 255 to map them to [0, 1] interval:

RAW_norm = RAW_combined.astype(np.float32) / (16 * 255)

Do output channels 0, 1 and 2 correspond to R, G and B? Should output be float32 or uint8?

Yes, the output channels correspond to R, G and B. If you are submitting floating-point models, then the output should be float32; otherwise, uint8.
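Putting the snippet from the question together with the divisor given in the answer, the packing step might look like the sketch below. The function name `pack_raw` and the `divisor` parameter are illustrative assumptions. The channel order and the `16 * 255` constant are taken from the posts above.

```python
import numpy as np


def pack_raw(raw, divisor=16 * 255):
    """Pack an H x W Bayer mosaic into an (H/2, W/2, 4) float32 array."""
    # Channel order follows the sample code quoted in the thread.
    ch_B = raw[1::2, 1::2]
    ch_Gb = raw[0::2, 1::2]
    ch_R = raw[0::2, 0::2]
    ch_Gr = raw[1::2, 0::2]
    combined = np.dstack((ch_B, ch_Gb, ch_R, ch_Gr))
    # Divide by the constant from the answer to map values to roughly [0, 1].
    return combined.astype(np.float32) / divisor


# Tiny synthetic mosaic, only to show the resulting shape and dtype.
raw = np.arange(16, dtype=np.uint16).reshape(4, 4)
packed = pack_raw(raw)
print(packed.shape, packed.dtype)
```

A batch dimension would still need to be added (for example with `packed[np.newaxis, ...]`) before feeding the array to a model that expects a 4D tensor.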

sayak

New member

RAW_norm = RAW_combined.astype(np.float32) / (16 * 255)

I see. So, the baseline script could have been updated accordingly here. @Andrey Ignatov

bit more light on the core math to scale the pixel values when dealing with different bits of images

Pixel values of N-bit images lie in the interval [0, 2^N] (though the real interval is actually a bit smaller), therefore 10-bit images should be divided by ~2^10=1024, and 14-bit ones by ~2^14=16384.

sayak

New member

@Andrey Ignatov with this scaling, would this be affected as well (the final output of PyNet)?

Python:

output_l0 = tf.nn.tanh(conv_l0_out) * 0.58 + 0.5

No, please find a more detailed answer here.
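For reference, the expression quoted in the question depends only on the activation, not on how the input was scaled. The short sketch below evaluates it with plain Python floats instead of TensorFlow tensors. The function names are hypothetical.

```python
import math


def pynet_output(activation, scale=0.58, offset=0.5):
    """Scalar version of tanh(x) * 0.58 + 0.5 from the quoted line."""
    return math.tanh(activation) * scale + offset


def output_bounds(scale=0.58, offset=0.5):
    """Open interval that the expression can approach but never reach."""
    return (offset - scale, offset + scale)


print(pynet_output(0.0))   # midpoint of the range
print(output_bounds())     # roughly (-0.08, 1.08)
```

Since tanh lies strictly between -1 and 1, the output can fall slightly outside [0, 1], so some clipping may be needed before converting to 8-bit images.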

Hi @sayak

A warning appears when dumping the validation images:

"Lossy conversion from float32 to uint8. Range [-0.028580307960510254, 0.9456811547279358]. Convert image to uint8 prior to saving to suppress this warning"

Does it map the floating-point range [-0.028580307960510254, 0.9456811547279358] to [0, 255]?

I think the output enhanced_image needs some processing before the image is saved.

For example:

1. Clip the range of enhanced_image to [0, 1]

2. enhanced_image = int(enhanced_image * 255)

3. Save the image

What do you think?

sayak

New member

Yes, that should be the case. Cc: @tulasi1729
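The three steps proposed above could be sketched as follows. This is a minimal example, assuming `enhanced_image` is a NumPy float array. The helper name `to_uint8` is hypothetical, and rounding to the nearest integer is used here instead of the truncation that `int(...)` would imply.

```python
import numpy as np


def to_uint8(enhanced_image):
    """Clip a float image to [0, 1] and convert it to uint8 in [0, 255]."""
    clipped = np.clip(enhanced_image, 0.0, 1.0)
    # Round to the nearest integer before casting (a choice, not a requirement).
    return np.round(clipped * 255.0).astype(np.uint8)


# Values resembling the range reported in the warning.
sample = np.array([-0.0286, 0.25, 0.9457, 1.2], dtype=np.float32)
print(to_uint8(sample))
```

The resulting array can then be passed to whichever image writer is already in use, which should no longer need to rescale it.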

Hi, @Andrey Ignatov

The PSNR is based on the submitted PNG images. Do we need to generate those PNG images with the TFLite file (if it is quantized to FP16 or UINT8)?

The images should be generated using the obtained TFLite models. Even if you are submitting some other images now, during the final test phase we will be working only with your TFLite models for evaluating the quality of your solutions.

There is a huge latency discrepancy between the submission result and the result on my Redmi K30 Ultra.

I got 100~130 ms on the Redmi K30 Ultra using its GPU, but the submission server reported that the same TFLite model took over 500 ms (which is almost the same as the time when running on the Redmi K30 Ultra CPU).

The Redmi K30 Ultra certainly has a MediaTek Dimensity 1000+ inside.

Is there any reason for this latency discrepancy, or is there an error on the server side?

on Redmi K30 Ultra using its GPU

You should run your model with NNAPI, not on the GPU, as the former is used for executing neural networks on the Dimensity 1000+ NPU.
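One way to try NNAPI locally is the standard TensorFlow Lite `benchmark_model` tool, run over adb. The sketch below only builds the command line. It assumes the binary and the model were already pushed to the device, and both paths are hypothetical. The `--graph`, `--use_nnapi` and `--num_runs` flags belong to that tool.

```python
def benchmark_command(model_path, use_nnapi=True, num_runs=10,
                      binary="/data/local/tmp/benchmark_model"):
    """Build an adb command that runs benchmark_model on a connected phone."""
    return [
        "adb", "shell", binary,
        f"--graph={model_path}",
        f"--use_nnapi={'true' if use_nnapi else 'false'}",
        f"--num_runs={num_runs}",
    ]


# Hypothetical on-device model location.
cmd = benchmark_command("/data/local/tmp/model.tflite")
print(" ".join(cmd))
```

The list can be passed to `subprocess.run(cmd)` on a machine with adb installed. Results may still differ from the server, which uses MediaTek's own delegate.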

Hi, let me make sure I have it right. We need to generate two TFLite files (with output sizes [1, 256, 256, 3] and [1, 1088, 1920, 3]) based on the same ckpt file, and then use the '[1, 256, 256, 3]' TFLite file to generate the 256x256 RGB images. Finally, we submit the RGB images and the [1, 1088, 1920, 3] TFLite file.

If so, I have a question about TFLite quantization. As far as I know, tflite_convert requires a static input shape and a representative_dataset for post-training integer quantization. We can use the 256*256 RAW data to generate the quantized '[1, 256, 256, 3]' TFLite file, but how do we generate the quantized '[1, 1088, 1920, 3]' TFLite file (544*960 data is missing)? Sorry for being such a hassle.

Now, I just use dummy data (random noise) to measure the latency of the [1, 1088, 1920, 3] TFLite file.

Is the input used to measure the latency a dummy tensor or real raw data like the provided training data?

And do you use variable tensors or only one fixed tensor to measure the latency?

But regarding your questions, maybe I am overcomplicating things. If I want to quantize the model, I just need to use dummy data to quantize the "[1, 1088, 1920, 3]" TFLite file, and then submit the RGB images (from the quantized '[1, 256, 256, 3]' TFLite file) and the [1, 1088, 1920, 3] TFLite file.

Is the "[1, 1088, 1920, 3]" TFLite file used just for the latency measurement? It wouldn't be considered for the RAW-to-RGB conversion.

We need to generate two TFLite files (with output sizes [1, 256, 256, 3] and [1, 1088, 1920, 3]) based on the same ckpt file, and then use the '[1, 256, 256, 3]' TFLite file to generate the 256x256 RGB images. Finally, we submit the RGB images and the [1, 1088, 1920, 3] TFLite file.

Yes, exactly.

We can use the 256*256 RAW data to generate the quantized '[1, 256, 256, 3]' TFLite file, but how do we generate the quantized '[1, 1088, 1920, 3]' TFLite file (544*960 data is missing)? Sorry for being such a hassle.

You can basically use any input images for quantizing the second model.

Is the input used to measure the latency a dummy tensor or real raw data like the provided training data?

We are using real data, though this has almost no effect on the resulting runtime values.

And do you use variable tensors or only one fixed tensor to measure the latency?

Yes, the size of the input tensor should be fixed and equal to [1, 544, 960, 4].

Is the "[1, 1088, 1920, 3]" TFLite file used just for the latency measurement?

Yes.
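Since any input images may be used for quantizing the full-resolution model, a representative dataset can be as simple as a generator of random tensors with the fixed input shape. The sketch below is only an illustration. The function name, sample count and seed are assumptions, and random values in [0, 1) stand in for normalized RAW data.

```python
import numpy as np


def representative_dataset(num_samples=10, shape=(1, 544, 960, 4), seed=0):
    """Yield random float32 tensors of the fixed model input shape."""
    rng = np.random.default_rng(seed)
    for _ in range(num_samples):
        # The TFLite converter expects a list with one array per model input.
        yield [rng.random(shape, dtype=np.float32)]


# With TensorFlow installed, the generator is typically attached like this:
#   converter.optimizations = [tf.lite.Optimize.DEFAULT]
#   converter.representative_dataset = representative_dataset

first = next(representative_dataset(num_samples=1))
print(first[0].shape, first[0].dtype)
```

Random calibration data is likely to give poor quantization ranges for image quality, so this is only reasonable for a model used purely for latency measurement.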

Thanks for your answer.

I want to clarify my question

The intent of the above question, "do you use variable tensors or only one fixed tensor to measure the latency?", was to ask whether you measure the latency using only one real input, or using multiple real inputs and then averaging the results.

Thanks in advance

Thanks for your reply; please let me reconfirm it.

You can basically use any input images for quantizing the second model.

We can use dummy data (random noise) to quantize the "[1, 1088, 1920, 3]" TFLite file, because it is used just for the latency measurement and not for the image quality (PSNR) measurement.

Thanks

if you measure the latency using only one real data or measure the latency using multiple real data and then average them.

Multiple data + averaging.

We can use dummy data (random noise) to quantize the "[1, 1088, 1920, 3]" TFLite file

Yes, that's correct.
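The "multiple data + averaging" measurement mentioned above can be approximated locally with a small timing loop. This is a generic sketch, not the organizers' benchmarking code. `run_fn` stands for any callable that runs one inference, and the warm-up count is an assumption.

```python
import time


def mean_latency_ms(run_fn, inputs, warmup_runs=1):
    """Average wall-clock time of run_fn over several inputs, in milliseconds."""
    inputs = list(inputs)
    if not inputs:
        raise ValueError("at least one input is required")
    # Warm-up calls are executed but not timed.
    for sample in inputs[:warmup_runs]:
        run_fn(sample)
    timings = []
    for sample in inputs:
        start = time.perf_counter()
        run_fn(sample)
        timings.append((time.perf_counter() - start) * 1000.0)
    return sum(timings) / len(timings)


# Stand-in workload so the sketch runs without a model.
print(mean_latency_ms(lambda x: sum(range(x)), [10_000, 20_000, 30_000]))
```

With a real model, `run_fn` would wrap the interpreter call on a fixed-size `[1, 544, 960, 4]` tensor.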

Hi @Andrey Ignatov

Regarding the above discussion.

Suppose we submit the images based on the FP TFLite model for evaluating the quality, but generate the quantized TFLite model for the latency measurement.

Is such a result acceptable?

No! Please check this response.

INFO: Init

INFO: BuildGraph

INFO: AddOpsAndTensors

INFO: AddOpsAndTensors done

INFO: total_input_byte_size: 8355840

INFO: total_output_byte_size: 25067520

INFO: NeuronModel_identifyInputsAndOutputs

INFO: NeuronModel_relaxComputationFloat32toFloat16: 1

INFO: NeuronModel_finish

INFO: BuildGraph done

INFO: NeuronCompilation_setPreference: 1

INFO: NeuronCompilation_setPriority: 110

ERROR: Neuron returned error NEURON_UNMAPPABLE at line 778 while completing Neuron compilation.

ERROR: Node number 26 (TFLiteNeuronDelegate) failed to prepare.

ERROR: Restored original execution plan after delegate application failure.

Failed to apply NeuronDelegate delegate.

Benchmarking failed.

Traceback (most recent call last):

File "/tmp/codalab/tmp540dOQ/run/program/evaluation.py", line 178, in <module>

file = open(LOG_NAME, 'r')

IOError: [Errno 2] No such file or directory: '/tmp/codalab/tmp540dOQ/run/output/output.csv'

I got the error above when I submitted my model.

It's really hard to debug the error from the log above, as it seems to be related to TfLiteDelegate. Moreover, this error does not occur on my Xiaomi Redmi, where I ran the model with NNAPI this time. Could you help me with the above error?
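One sanity check that may help when reading this log: the reported byte sizes match float32 tensors of the required input and output shapes, which suggests the tensor shapes themselves are not the problem. The helper below is a hypothetical illustration.

```python
import math


def tensor_bytes(shape, bytes_per_element=4):
    """Size in bytes of a dense tensor (float32 by default)."""
    return math.prod(shape) * bytes_per_element


print(tensor_bytes((1, 544, 960, 4)))    # compare with total_input_byte_size
print(tensor_bytes((1, 1088, 1920, 3)))  # compare with total_output_byte_size
```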

Could you help me with the above error?

You should try one of the following options:

1. Set the experimental_new_converter option to False when generating the TFLite model. In this case, the old TOCO converter compatible with MediaTek's Neuron Delegate will be used instead of the new MLIR one.

2. Convert your model using TensorFlow 1.15 (you can install it in a separate python / conda environment).
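The first option amounts to setting one attribute on the converter object before calling `convert()`. The sketch below shows this with a stand-in object so that it runs without TensorFlow. The helper name and the model path in the comments are hypothetical, and whether the flag still selects TOCO depends on the installed TensorFlow version.

```python
from types import SimpleNamespace


def use_legacy_converter(converter):
    """Ask a TFLite converter object to use the old TOCO path instead of MLIR."""
    converter.experimental_new_converter = False
    return converter


# Typical usage with TensorFlow installed (the model path is hypothetical):
#   import tensorflow as tf
#   converter = tf.lite.TFLiteConverter.from_saved_model("path/to/saved_model")
#   tflite_model = use_legacy_converter(converter).convert()

# Stand-in object so the sketch runs without TensorFlow.
fake_converter = SimpleNamespace(experimental_new_converter=True)
use_legacy_converter(fake_converter)
print(fake_converter.experimental_new_converter)
```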

Hi @Andrey Ignatov

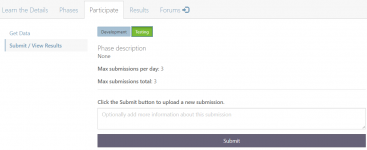

The same questions for me.

What's the structure of our submission for uploading at testing phase?

Thanks for your answer.

But when I set the experimental_new_converter option to False, I got a core dump on your submission server, as follows:

WARNING: Your kernel does not support swap limit capabilities or the cgroup is not mounted. Memory limited without swap.

* daemon not running; starting now at tcp:5037

* daemon started successfully

timeout: invalid time interval ‘/t’

Try 'timeout --help' for more information.

WARNING: linker: Warning: "/data/local/tmp/MAI_21/benchmark_model" unused DT entry: DT_RPATH (type 0xf arg 0x951) (ignoring)

INFO: Initialized TensorFlow Lite runtime.

Segmentation fault (core dumped)

Traceback (most recent call last):

File "/tmp/codalab/tmp0FSKOa/run/program/evaluation.py", line 178, in <module>

file = open(LOG_NAME, 'r')

IOError: [Errno 2] No such file or directory: '/tmp/codalab/tmp0FSKOa/run/output/output.csv'

Can you tell me how you set up the evaluation server, so that I can reproduce these errors on my Redmi K30? Did you use aws-neuron? When I googled "NeuronCompilation" (which appears in the error log), I found almost no information except for aws-neuron.

A further question: is there any restriction on the TFLite model footprint? (Is ~5 MB okay?)

What's the structure of our submission for uploading at testing phase?

Check the email sent to all challenge participants yesterday.

A further question: is there any restriction on the TFLite model footprint? (Is ~5 MB okay?)

Yes, this is fine. All models are tested by MediaTek using their Neuron Delegate. It is also present in the latest AI Benchmark app, but requires Android 11 which was not yet released for the Redmi K30.

If the first option fails, please try the second one with TF 1.15.

Thanks. It seems that an Android 11 beta version has been released for the Redmi K30, so I will upgrade Android and then try the latest AI Benchmark app. Will that then be the same environment as your evaluation server?

Also, my model is stuck on the evaluation server in the development stage (it has been in 'SUBMITTED' status for more than six hours). Is there a problem on the server, or is it a problem on my side?

Then will it be same environment with your evaluation server?

Yes, a very similar one, though the testing is done on MediaTek's dev devices and software.

stuck in 'SUBMITTED' status for more than six hours

Sometimes this happens when there are too many submissions; the results should eventually be released.

Thanks, @Andrey Ignatov.

Check the email sent to all challenge participants yesterday.

Regarding the message, it states:

"with an output tensor of size [1, 1088, 1920, 3] producing the final reconstructed RGB images.

......... no additional scripts are accepted."

Is there any constraint on the range of the model output?

Currently my output range is 0~1, so I need post-processing (rescaling to 0~255) to generate the PNG images.

Is this post-processing included in your test scripts?

Currently my output range is 0~1, so I need post-processing (rescaling to 0~255) to generate the PNG images.

Yes, you just need to add one additional layer to your model multiplying the outputs by 255.

Will the organizers provide the test scripts or reference code for producing the RGB images?

We want to make sure that the output results are consistent.

Will the organizers provide the test scripts or reference code for producing the RGB images?

Your model should not save the images - its outputs will be directly used to compute the final fidelity scores. The output format is the same as of the images you submitted to Codalab before.

Thanks for your answer; I want to clarify my question.

1) Should the submitted TFLite model produce INT8 values for the fidelity score computation?

2) The rounding step ([0~1] => [0~255]) will also be included in the runtime measurement.